Sean Zdenek, PhD

Access · Sound · Rhetoric · Captioning · Film/Media

Associate Professor of Technical and Professional Writing

University of Delaware

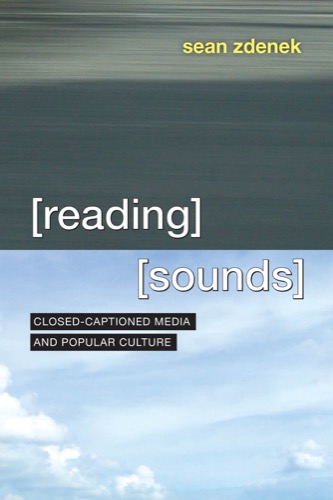

book

Reading Sounds: Closed-Captioned Media and Popular Culture

University of Chicago Press, 2015 · ReadingSounds.net

A rhetorical analysis of closed captioning in popular culture that explores how captions construct meaning, represent sound, and shape our experience of media.

Winner: 2017 Best Book in Technical or Scientific Communication, CCCC/NCTE


biography

Dr. Sean Zdenek is an associate professor of technical and professional writing at the University of Delaware. His research and teaching interests include technical writing, disability studies, sound studies, and rhetorical theory. His book, Reading Sounds: Closed-Captioned Media and Popular Culture (University of Chicago Press, 2015), won the 2017 award for best book in technical or scientific communication from the Conference on College Composition and Communication (CCCC).

Sean grew up in California and currently lives in northeastern Maryland, a few miles from both Delaware and Pennsylvania.

selected publications

Zdenek, Sean (2020). Transforming Access and Inclusion in Composition Studies and Technical Communication. College English 82(5), 536–44.

Zdenek, Sean (2018). Guest Editor’s Introduction: Reimagining Disability and Accessibility in Technical and Professional Communication. Communication Design Quarterly 6(4), 4–11.

Zdenek, Sean (2018). Designing Captions: Disruptive Experiments with Typography, Color, Icons, and Effects. Kairos: A Journal of Rhetoric, Technology, and Pedagogy 23(1).

Zdenek, Sean (2011). Which Sounds Are Significant? Towards a Rhetoric of Closed Captioning. Disability Studies Quarterly 31(3).

Zdenek, Sean (2009). Accessible Podcasting: College Students on the Margins in the New Media Classroom. Computers & Composition Online. (PDF)

teaching

I teach a range of courses in writing studies. Recent and upcoming courses include:

- Synthetic rhetoric: Writing, designing, and creating with AI

- Unplugged: Reclaiming agency in an attention economy

- Technical writing

- Grant and proposal writing (graduate)

- Designing inclusive futures (graduate)

blog posts

Occasional writing on captioning, disability, film, and whatever Hypnotoad demands.
